When a financial services company recently began using Cursor, an AI technology that writes computer code, the impact was immediate. The company went from producing 25,000 lines of code a month to 250,000. This created a backlog of 1 million lines needing review, according to Joni Klippert, CEO of security startup StackHawk.

“The sheer amount of code being delivered, and the increase in vulnerabilities, is something they can’t keep up with,” she said. As development accelerated, other departments like sales and marketing were forced to pick up the pace, creating “a lot of stress.”

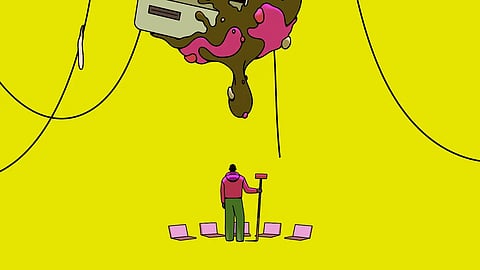

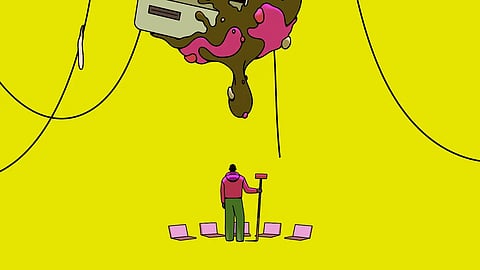

Since AI coding tools from Anthropic, OpenAI, and Cursor gained traction last year, one result has become apparent: code overload. Aided by these tools, tech workers are producing software so quickly it has become unmanageable. With almost anyone now able to spin up software ideas in hours, companies are struggling to deal with the glut.

In Silicon Valley, many see this as the new reality. Some say the tools give them "coding superpowers," freeing them to focus on software concepts rather than the arduous work of building them. But there are not enough engineers to review this explosion of code for mistakes.

Recruiters are increasingly seeking senior engineers experienced in spotting errors and monitoring software for risks. Open-source projects have been inundated with AI-generated contributions, and flaws in the code frequently lead to security vulnerabilities or system crashes.

“The blessing and the curse is that now everyone inside your company becomes a coder,” said Michele Catasta, president and head of AI at Replit.

A Google survey in September found that 90% of software developers use AI to assist their work, while 71% of those who write code specifically use AI for that task. This widespread adoption has fueled fears that AI could replace many engineers. Tech giants, including Pinterest, Block, and Atlassian, have recently cut thousands of jobs, citing efficiencies created by AI.

“Projects that once required hundreds of engineers can now be done by tens,” Andrew Bosworth, Meta’s chief technology officer, told employees in an internal memo. “Work that used to take months can now take days.” He added that AI has “profound consequences” for organisational structures.

Until recently, turning ones and zeros into programs was a meticulous process. Engineers pored over complicated programming languages and might write only a few dozen lines of vetted, bulletproof code a day. Advances in AI changed this with the rise of "agents," AI-powered bots that create software largely on their own.

Early versions from startups like Cursor showed promise, but in November, coding agents "levelled up." Leading AI firms Anthropic and OpenAI released updated versions of the software powering Claude Code and Codex. Tech workers discovered these agents had evolved from occasionally helpful partners to full-fledged code-generating wizards. With minimal human guidance, an AI agent can write a program in a fraction of the time required by humans. The result is a deluge.

Many companies are now dealing with the ripple effects. AI-generated code must be tested for bugs, security, and compliance, but it is often unclear who is responsible for fixing issues. Historically, responsibility lay with the person who wrote the code.

Now, companies are struggling to hire enough application security engineers to monitor risks. “There are not enough application security engineers on the planet to satisfy what just American companies need,” said Joe Sullivan, an adviser to Costanoa Ventures. Large firms would hire five to ten more people in these roles immediately if they could, he noted.

Other problems are more technical. AI coding tools often perform better on laptops than in secure, web-based environments owned by Amazon or Microsoft. Consequently, more engineers are downloading their company’s entire codebase to personal hardware, creating a massive security risk if a laptop is lost or stolen.

“That’s an example of a crazy risk no one thought of six months ago that they’re trying to solve right now,” Sullivan said.

Sachin Kamdar, a co-founder of Elvex, an AI agent startup, said he created a rule around 16 months ago that all of the company’s code needed to be reviewed by a human. Otherwise, problems would be harder to fix because no one would understand the work that AI had done.

“It’s just going to break something, and they’re not going to know why it broke,” he said.

The New York Times